Predictions: People & Process Trends – 2014

RFG Perspective: The global economic headwinds in 2014, which constrain IT budgets, will force business and IT executives to more closely examine the people and process issues for productivity improvements. Externally IT executives will have to work with non-IT teams to improve and restructure processes to meet the new mobile/social environments that demand more collaborative and interactive real-time information. Simultaneously, IT executives will have to address the data quality and service level concerns that impact business outcomes, productivity and revenues so that there is more confidence in IT. Internally IT executives will need to increase their focus on automation, operations simplicity, and security so that IT can deliver more (again) at lower cost while better protecting the organization from cybercrimes.

As mentioned in the RFG blog "IT and the Global Economy – 2014" the global economic environment may not be as strong as expected, thereby keeping IT budgets contained or shrinking. Therefore, IT executives will need to invest in process improvements to help contain costs, enhance compliance, minimize risks, and improve resource utilization. Below are a few key areas that RFG believes will be the major people and process improvement initiatives that will get the most attention.

Automation/simplicity – Productivity in IT operations is a requirement for data center transformation. To achieve this IT executives will be pushing vendors to deliver more automation tools and easier to use products and services. Over the past decade some IT departments have been able to improve productivity by 10 times but many lag behind. In support of this, staff must switch from a vertical and highly technical model to a horizontal one in which they will manage services layers and relationships. New learning management techniques and systems will be needed to deliver content that can be grasped intuitively. Furthermore, the demand for increased IT services without commensurate budget increases will force IT executives to pursue productivity solutions to satisfy the business side of the house. Thus, automation software, virtualization techniques, and integrated solutions that simplify operations will be attractive initiatives for many IT executives.

Business Process Management (BPM) – BPM will gain more traction as companies continue to slice costs and demand more productivity from staff. Executives will look for BPM solutions that will automate redundant processes, enable them to get to the data they require, and/or allow them to respond to rapid-fire business changes within (and external to) their organizations. In healthcare in particular this will become a major thrust as the industry needs to move toward "pay for outcomes" and away from "pay for service" mentality.

Chargebacks – The movement to cloud computing is creating an environment that is conducive to implementation of chargebacks. The financial losers in this game will continue to resist but the momentum is turning against them. RFG expects more IT executives to be able to implement financially-meaningful chargebacks that enable business executives to better understand what the funds pay for and therefore better allocate IT resources, thereby optimizing expenditures. However, while chargebacks are gaining momentum across all industries, there is still a long way to go, especially for in-house clouds, systems and solutions.

Compliance – Thousands of new regulations took effect on January 1, as happens every year, making compliance even tougher. In 2014 the Affordable Care Act (aka Obamacare) kicked in for some companies but not others; compounding this, the U.S. President and his Health and Human Services (HHS) department keep issuing modifications to the law, which impact compliance and compliance reporting. IT executives will be hard pressed to keep up with compliance requirements globally and to improve users' support for compliance.

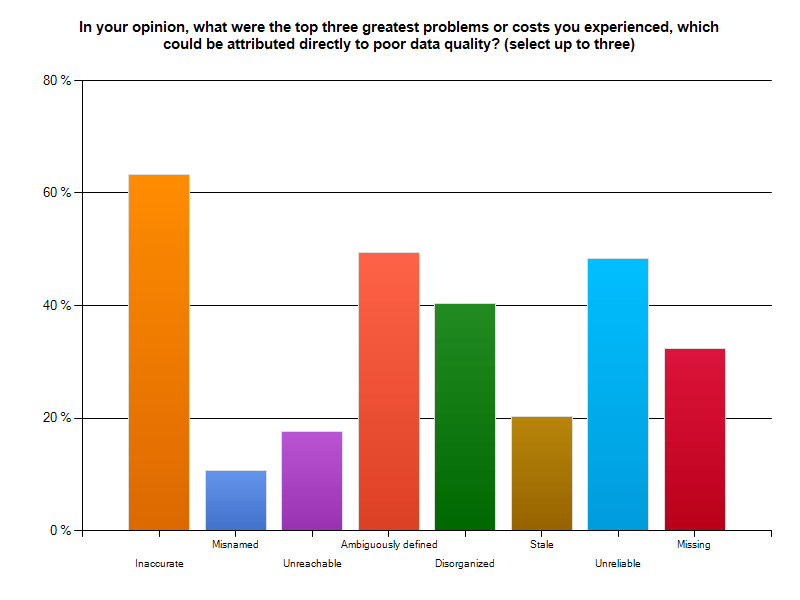

Data quality – A recent study by RFG and Principal Consulting on the negative business outcomes of poor data quality found that a majority of users consider data quality suspect. Most respondents believed inaccurate, unreliable, ambiguously defined, and disorganized data were the leading problems to be corrected. Some users will partially address this in 2014 by looking at data confidence levels in association with the type and use of the data. IT must fix this problem if it is to regain trust. But it is not just an IT problem, as it is costing companies dearly, in some cases more than 10 percent of revenues. Some IT executives will begin to capture the metrics required to build a business case to fix this while others will implement data quality solutions aimed at fixing select problems that have been determined to be troublesome.

Operations efficiency – This will be an overriding theme for many IT operations units. As has been the case over the years the factors driving improvement will be automation, standardization, and consolidation along with virtualization. However, for this to become mainstream, IT executives will need to know and monitor the key data center metrics, which for many will remain a challenge despite all the tools on the market. Look for minor advances in usage but major double-digit gains for those addressing operations efficiency.

Procurement – With the requirement for agility and the move towards cloud computing, more attention will be paid to the procurement process and supplier relationship management in 2014. Business and IT executives that emphasize a focus on these areas can reduce acquisition costs by double digits and improve flexibility and outcomes.

Security – The use of big data analytics and more collaboration will help improve real-time analysis but security issues will still be evident in 2014. RFG expects the fallout from the Target and probable Obamacare breaches will fuel the fears of identity theft exposures and impair ecommerce growth. Furthermore, electronic health and medical records in the cloud will require considerable security protections to minimize medical ID theft and payment of HIPAA and other penalties by SaaS and other providers. Not all providers will succeed and major breaches will occur.

Staffing – IT executives will do limited hiring again this year and will rely more on cloud services, consulting, and outsourcing services. There will be some shifts on suppliers and resource country-pool usage as advanced cloud offerings, geopolitical changes and economic factors drive IT executives to select alternative solutions.

Standardization – More and more IT executives recognize the need for standardization but advancement will require a continued executive push and involvement. Because this will become political, most new initiatives will be the result of the desire for cloud computing rather than internal leadership.

SLAs – Most IT executives and cloud providers have yet to provide the service levels businesses are demanding. More and better SLAs, especially for cloud platforms, are required. IT executives should push providers (and themselves) for SLAs covering availability, accountability, compliance, performance, resiliency, and security. Companies that address these issues will be the winners in 2014.

Watson – The IBM Watson cognitive system is still at the beginning of the acceptance curve but IBM is opening up Watson for developers to create their own applications. 2014 might be a breakout year, starting a new wave of cognitive systems that will transform how people and organizations think, act, and operate.

RFG POV: 2014 will likely be a less daunting year for IT executives but people and process issues will have to be addressed if IT executives hope to achieve their goals for the year. This will require IT to integrate itself with the business and work collaboratively to enhance operations and innovate new, simpler approaches to doing business. Additionally, IT executives will need to invest in process improvements to help contain costs, enhance compliance, minimize risks, and improve resource utilization. IT executives should collaborate with business and financial executives so that IT budgets and plans are integrated with the business and remain so throughout the year.

IT and the Global Economy – 2014

RFG Perspective: There will be a number of global economic headwinds in 2014 that will mean slow or no growth around the world. The U.S. could creep up to three percent growth but the Affordable Care Act (Obamacare) implementation has a high probability of reducing growth to the 2013 level or less. This uncertainty will keep IT budgets constrained and make it difficult for IT executives to keep current in technology, meet new business demands, and develop the skills necessary to satisfy corporate requirements.

Third quarter U.S. GDP gives the illusion that the U.S. economy is strengthening but that is hardly the case. The gains were in inventory buildups. Remove that and the economy of the United States mirrors that of many other countries. Europe remains weak and bounces in and out of recession while many of the so-called emerging markets are no longer bounding ahead. The BRIC nations (Brazil, Russia, India, China), whose growth had offset the weakness in the developed nations, are now underperforming. Growth in Brazil, India, and Russia has dropped significantly from the peak while China's merely slipped into more normal numbers. Now that the U.S. Federal Reserve has begun its taper, these nations could tumble even more. This does not bode well for revenue growth, which, in turn, means tighter IT budgets.

In addition to the Federal Reserve's actions overhanging the U.S. and global markets, Obamacare may add to the negative effect. The Affordable Care Act (aka Obamacare) is not that affordable and it seems the majority of individuals (and potentially corporations) are finding monthly payments are significantly higher, as are deductibles. This could slow the general economy even more if consumers and corporations are forced to hold back spending to cover basic healthcare costs.

The Bellwethers Struggle

There are three IT bellwethers for growth that we can look at to see how the world economy is faring and how it is already impacting IT acquisitions. Some may say these companies – Cisco Systems Inc., Hewlett-Packard Co. (HP), and IBM Corp. – are no longer applicable in the new world of cloud computing but that is a false premise. These three firms are all heavily into the cloud and are growing rapidly in cloud/Internet related areas.

Cisco reported single digit revenue growth for 2013 year-over-year with revenues in the Asia Pacific area shrinking by three percent. While that is not bad, CEO John Chambers warned that revenues would decline eight to 10 percent in this quarter – its biggest drop in 13 years. One reason is that it is struggling in the top five emerging markets where revenues declined 21 percent. Brazil was down 25 percent; China, India and Mexico dropped 18 percent; and Russia slid 30 percent.

HP's fiscal year 2013 showed similar revenue results – down by single digits. It had lower revenues in all regions, and printing supplies slipped four percent year-over-year. Printing supplies has been one of HP's internal leading economic indicators, so this news is not good.

IBM's third quarter revenues came in four percent under the previous year's quarter, with all geographies down slightly or flat. But its growth markets revenues fell by nine percent and the BRIC revenues declined by 15 percent. There is a pattern here.

The collapse of the revenues in the emerging markets and BRIC nations is less a story of the bellwethers than of the countries' declining economies. These countries and the U.S. were the engines of growth. Not any longer.

RFG POV: 2014 has the appearance of being a less daunting year for IT executives than the past few years but economic, geopolitical and governmental disruptions could change all that almost overnight. Businesses may be able to avoid the global minefields that are lurking everywhere but the risk exposure is there. Therefore, it is highly likely that most CEOs and CFOs will want to constrain IT spending – i.e., flat, down or up slightly. Moreover, most budgets are reflections of the prior year's budget with modifications to address the changing business requirements and economic environment. Therefore, IT executives can expect to have limited options as they work to meet new business demands, keep up with technology, and develop the skills needed to satisfy corporate requirements. It is time to innovate, do more with less again, and/or find self-funding solutions. Additionally, IT executives will need to invest in process improvements to help contain costs, enhance compliance, minimize risks, and improve resource utilization. IT executives should work closely with business and financial executives so that IT budgets and plans are integrated with the business and remain so throughout the year.

Major Advances in BPM and ERP

RFG Perspective: Business executives in small- and medium-sized businesses (SMBs), as well as those in rapidly-changing large organizations, can be at a disadvantage compared to their counterparts in relatively staid organizations. They must juggle a myriad of challenges, oftentimes without automated processes, usually because traditional ERP solutions either cannot be modified easily or the price point is prohibitive. These executives need business process management (BPM) and/or enterprise resource planning (ERP) solutions that will automate redundant processes, enable them to get to the data they require, and/or allow them to respond to rapid-fire business changes within (and external to) their organizations.

At the 2013 JRocket Marketing Analyst Road Show in Boston, Massachusetts, three innovative, disruptive technology vendors made announcements that can help business executives optimize their business processes. These game-changing vendors are:

• Apparancy, the sister company of Corefino and powered by Corefino's 500 plus pre-built cloud-based Software-as-a-Service (SaaS) process framework, made its debut. Apparancy BPM solutions will initially target healthcare-related challenges faced by both enterprises and providers, and other areas in desperate need of quantum leaps in business process improvements.

• SYSPRO is transforming the manufacturing/distribution sector through its unprecedented rapidly-deployed and specialized solutions for industry micro-verticals both on-premise and in the cloud.

• UNIT4 is a global ERP solution provider that is expanding its offerings to Businesses Living IN Change (BLINC)™: businesses that are changing rapidly due to mergers and acquisitions, global expansion, compliance, reorganization, etc.

Apparancy

Today, executives must transform themselves into business process visionaries to guide their organizations into a sustainable and thriving future. Executives across the enterprise, and in particular on the administrative side of healthcare, spend inordinate amounts of time on redundant and repetitive processes, distracting them from the real work at hand, which costs their organizations millions of dollars annually.

Market newcomer Apparancy delivers BPM expertise to vendors with an automated, compliance-centric, and holistic business process workflow framework. Apparancy's previously introduced cloud-based sister company Corefino has already proven that its 500-plus process framework can save organizations from 25 to 50 percent or more over costs attached to their current workflow frameworks.

Apparancy customers get pre-built workflows to solve specific issues, such as compliance to Affordable Care Act (ACA) mandates, in a platform that sits on top of existing data systems, and that can then be continuously (and easily) updated and modified. The cloud-based SaaS model is proven (based on the five-year experiences of sister company Corefino) to support legal compliance while delivering substantial measurable ROI.

Apparancy's workflow platform minimizes and simplifies state-, federal-, and industry- mandated compliance. The framework vets data and marries systems of record with systems of engagement to make business processes accurate and auditable. In essence, the Apparancy solution enables the any device, anywhere, anytime paradigm to be applied to pre-configured business process workflows – an industry first.

Executives must be able to confidently manage, sustain, and grow their organizations well into the future – as well as remain compliant. Apparancy can provide these organizations with the information they need anytime, anywhere as well as the detailed-as-necessary visibility into internal workflows without having to increase talent acquisition. Enterprise human resources (HR) executives and healthcare providers dealing with new legislation are key areas under extreme stress for which Apparancy will provide much-needed support.

SYSPRO

In a super-sized world, mid-market business executives have learned that "bigger is not always better." The answer to complex business problems is not a larger, more complex ERP solution. Moreover, one size does not fit all. This is especially true in manufacturing and distribution, in which consolidation, outsourcing, and off-shoring have become de rigueur. In addition, regulations change continuously and large retailer organizations often define the standards which SMB manufacturers/distributors must follow. This has become increasingly challenging, driving many out of business.

For business executives to respond to change with agility as well as grow their businesses, they require an ERP vendor with solutions that go beyond simply targeting the manufacturing and distribution verticals. They need a vendor solution that drills down into the business, finance, technology, and regulatory challenges of specific micro-verticals, such as food and beverage, medical devices, electronics, or machinery and equipment.

At the 2013 Analyst Road Show, SYSPRO, a best-of-breed ERP solution for SMB manufacturers/distributors, announced the SYSPRO USA BRAIN BOOST program, part of the U.S. team's successful "Einstein" market strategy. The four-point initiative continues to deliver on SYSPRO's 35-year legacy of providing standards-based technology, multi-tiered architectures, and scalability along with an agile user interface. This enables business executives to continuously and swiftly adapt to market, standards, and compliance fluctuations.

United States manufacturing and distribution sectors have undergone sector-shattering changes. Many have been unable to adapt and have been forced to close. To remain in the game and be continuously viable, it is paramount for manufacturing and business executives to partner with a reliable, customer-focused, and future-directed vendor.

UNIT4

Business executives in fast-changing organizations or those with highly complex financial reporting structures are often at the mercy of rigid two-dimensional systems that do not allow for nimble access to, and manipulation of, financial data. In addition, many of the widely-installed ERP systems are prohibitively expensive to install, maintain, and then continuously modify to allow for this kind of agility.

The promise of post-implementation business flexibility/agility from larger ERP vendors has in many cases not been fulfilled. UNIT4 has found itself in the enviable position of being the replacement product for many high-end high-cost ERP solutions that failed to meet customer needs and expectations and cost customers millions of dollars. UNIT4 is a least-cost ERP/financial solution provider that has successfully shifted its model to the cloud (without a dip in revenues). The entire acquisition and installation cost for UNIT4 software was typically the same as that of a one-year provider license for a competitor ERP solution and that is just the beginning of the savings.

At the December 2013 Analyst Road Show, UNIT4 made several announcements, including the launch of two financial performance products in the North American market (Cash Flow and Financial Consolidation) and a new change-supporting in-memory analytics solution. Recently IDC, a global market intelligence firm, conducted a survey sponsored by UNIT4 of 167 IDC customers surrounding ERP purchase, implementation, maintenance, upgrade, and re-implementation. Significant observations of the survey include: "UNIT4 customers spent average of 55 percent less than the general ERP community on supporting business change…UNIT4 customers also reported having to make moderate to substantial system change only 25 percent of the time for mergers and acquisitions…" compared to 64 percent of non-UNIT4 ERP customers.

It behooves business executives to take a closer look at the direct and indirect costs – as well as the business impacts – associated with making changes to their ERP systems. Executives who wish to cost-effectively leverage their ERP systems should consider comparing the total cost of ownership (TCO) and return on investment (ROI) of their existing solution to that of an alternative ERP vendor.
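The comparison suggested above can be sketched in a few lines of Python. This is a minimal illustration, not an RFG or IDC methodology: the `ErpOption` class, the cost categories, the five-year horizon, and every dollar figure are hypothetical placeholders an executive would replace with real numbers.

```python
from dataclasses import dataclass


@dataclass
class ErpOption:
    """Hypothetical cost model for one ERP option (all figures are made up)."""
    name: str
    acquisition_cost: float    # one-time licenses plus implementation
    annual_run_cost: float     # maintenance, support, hosting per year
    annual_change_cost: float  # cost of modifying the system per year

    def tco(self, years: int) -> float:
        """Total cost of ownership over the given number of years."""
        return self.acquisition_cost + years * (
            self.annual_run_cost + self.annual_change_cost
        )


def roi(total_benefit: float, total_cost: float) -> float:
    """Simple ROI: net benefit divided by cost."""
    return (total_benefit - total_cost) / total_cost


# Illustrative numbers only; the existing system has no new acquisition cost.
existing = ErpOption("existing", 0.0, 400_000.0, 300_000.0)
alternative = ErpOption("alternative", 500_000.0, 250_000.0, 135_000.0)

years = 5
existing_tco = existing.tco(years)
alt_tco = alternative.tco(years)
savings = existing_tco - alt_tco

# Treat the avoided spend on the existing system as the benefit of switching.
switch_roi = roi(total_benefit=existing_tco, total_cost=alt_tco)

print(f"{existing.name}: {existing_tco:,.0f}")
print(f"{alternative.name}: {alt_tco:,.0f}")
print(f"savings over {years} years: {savings:,.0f} (ROI {switch_roi:.1%})")
```

Separating run costs from change costs keeps the cost of business change visible instead of burying it in a single maintenance line.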

Summary

The December 2013 Analyst Road Show showcased three disruptive technology vendors with three different foci: Apparancy is poised to have a significant positive and indispensable impact on the healthcare sector because it will provide healthcare executives (and enterprises conforming to new healthcare legislation) with a SaaS-deployed, streamlined, and cost-conscious solution. SYSPRO continues to be the champion of customized and quickly deployable ERP solutions for SMB manufacturers and distributors. UNIT4 solutions are designed to enable executives to embrace business change – simply, quickly, and cost-effectively.

RFG POV: All disruptive technology vendors herein are primed to enable their customers to not only remain viable and be competitive, but to experience sustained revenue growth. Business executives, whether across the enterprise, in healthcare, SMB manufacturing/distribution, or larger but fast-changing organizations must look beyond solving today's business and IT problems. They must look to the future and be able to predict as well as respond nimbly and effectively to financial, market, and policy changes – well into the next two decades. Executives who possess business acumen should select long-term trusted vendor partners that will enable them to not only respond agilely to change but to do so faster than their competitors.

Blog written by Ms. Maria DeGiglio, Principal Analyst

Disrupt 2014

What did I learn (or what did you miss) at Disrupt 2014?

On Monday I attended The Robert Frances Group's (RFG) Disrupt 2014 conference in New York City. The attendees enjoyed an over-the-top lunch and several hours of interesting and informative presentations. I would probably gain weight just recounting the menu, so instead I'll review two of the presentations, and hint at a third.

1 - IT Disruptive Tech Trends and Directions

With this as the title, Cal Braunstein, CEO and Executive Director of Research for RFG, began by showing us how the pace of disruptive technology is increasing dramatically. According to AIIM, for more than 1/3 of the organizations studied, 90% of their IT spend adds no new value. It was the right start for Disrupt 2014.

IT budgets are increasing linearly, at 1-5% per year, while the data we produce is increasing exponentially. Disruption isn't just necessary, it is critical!

What is disruption? RFG defines it as industry leaders responding to the changes in customer demands and global economics by making fundamental changes in their approach to products, services, service delivery, engagement models, and the economic model on which their industry is based. You can read more about it here.

Cal focused on Storage Trends and Directions as a bellwether of disruption.

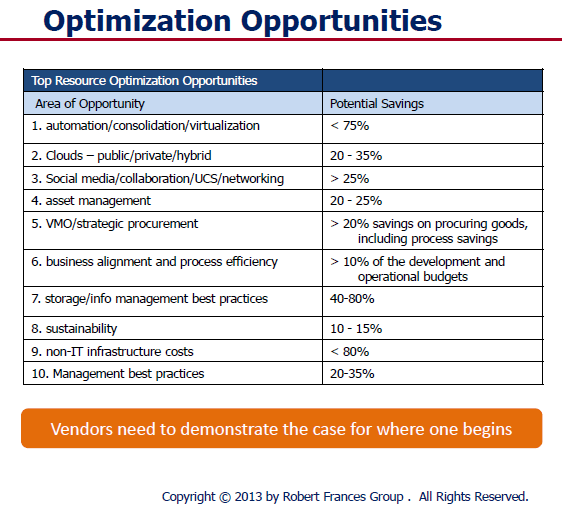

Intel conducted a study in 2012 that informed part of Cal's presentation. When looking at a data center and its technology, servers from before 2008 comprised 32% of the hardware but they consumed 60% of the data center power budget. Here is the bad news. The old gear only provided 4% of performance capacity. The point is that the technology rate of change is exponential and any IT executive who keeps IT hardware past 40 months is costing his company money. Keeping current and transforming the data center over a 3-year cycle should be viewed as a strategic approach to modernizing a data center and containing costs. The improvement in processing power vs. power consumed is truly disruptive. You could pay for a data center renewal simply by scrapping the old power-burning gear. RFG has identified the following optimization opportunities, which Cal presented.
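To put the Intel figures quoted above in perspective, here is a back-of-the-envelope calculation in Python. It assumes that the rest of the data center (the 68% of hardware that is newer) accounts for the remaining 40% of power and 96% of performance capacity. That split is inferred from the quoted shares, not stated in the study.

```python
def perf_per_power(perf_share: float, power_share: float) -> float:
    """Share of performance delivered per share of power consumed."""
    return perf_share / power_share


# Shares quoted for pre-2008 servers.
old_power, old_perf = 0.60, 0.04

# Assumption: newer gear accounts for whatever is left.
new_power, new_perf = 1.0 - old_power, 1.0 - old_perf

old_efficiency = perf_per_power(old_perf, old_power)
new_efficiency = perf_per_power(new_perf, new_power)
advantage = new_efficiency / old_efficiency

print(f"old gear: {old_efficiency:.3f} performance share per power share")
print(f"new gear: {new_efficiency:.3f} performance share per power share")
print(f"newer gear is roughly {advantage:.0f}x more power-efficient")
```

Under that assumption, the newer gear delivers about 36 times as much performance per unit of power, which is the arithmetic behind the claim that retiring old servers can help fund a data center renewal.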

RFG has "Disrupt 2014" marching orders for IT

- IT departments must keep up with disruptive technologies

- Don't wait for next wave to become mainstream - the time to act is now

- IT vision and strategy must include waves of change

- IT needs business and user executives to share the vision, integrate & buy-in

- Data Center transformation is a must and has a very positive business case

- IT vendors must show a business case, not just talk about products & services

2 - The Value of TCO Studies

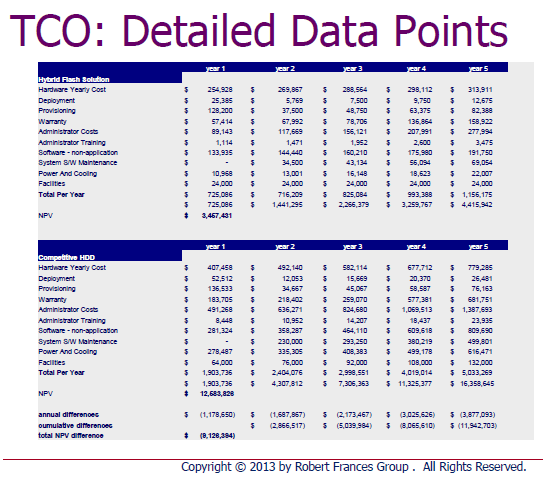

RFG Principal Analyst Gary McFadden presented on the value of Total Cost of Ownership (TCO) studies to support acquisition decisions. Gary's Disrupt 2014 thesis was straightforward. According to Gary...

Businesses today are willing to invest provided they can see a decent return and a positive cost value proposition. Our TCO studies address the total cost of acquisition and ownership and the return on investment.

In the RFG world, a TCO is a business-oriented custom research report that compares vendor offerings to those of their competitors. The report shows how the vendor's solution could be financially superior to traditional approaches, and considers soft-dollar aspects as well as hard costs.

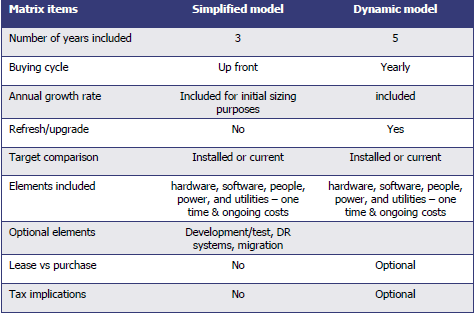

Knowing that, you should realize that all TCO studies are not created equal. Gary presented an overview of key TCO choices. Which one is right for you? Well, that depends on your needs. Your time horizon and buying cycle are chief determinants of which style of TCO would be right for you.

Making Sense of the Data

Gary correctly pointed out that no matter how comprehensive the data gathering phase of a TCO, the accumulated bulk of data is not actionable unless it is expressed in a useful manner. In the charts following, Gary contrasted the raw details with an organized RFG presentation. The left chart holds mysteries, while the right one offers answers.

Remember that all TCOs are not created equal. Make sure that the one you pay for delivers the information you need, and the ones you use in your research follow a robust development methodology and contain the insights you require to enable your decision.

By the way, don't think RFG delivers vendor-slanted product and service stories. That is not what they are about. Instead, RFG's TCO reports are targeted at business and technology executives including CIOs, IT VPs/directors, CFOs, CMOs, facilities executives, etc. They relate a business narrative designed to explain the business case for taking certain actions.

3 - Storm Insights "Mystery Presentation"

No, I can't tell you much. All I can say is that Dr. Adrian Bowles has an exciting and disruptive information services offering that will change the way IT product and services vendors learn about their markets, and the way their markets learn about them. Don't write off traditional research and advisory services yet, but stay tuned. I'll report about the Storm Insights offering as the new year unfolds. For now, take my word for it... this is very interesting!

The Bottom Line

Disrupt 2014 delivered great value and a great lunch to the appreciative attendees. RFG extended the hand of friendship and those that took hold learned much and enjoyed the sense of community that appears when like-minded professionals gather to exchange ideas. When RFG asks you to attend one of their meetings, the smart answer is "YES"!

Published by permission of Stuart Selip, Principal Consulting LLC

The Butterfly Effect of Bad Data (Part 2)

Last time... Bad Data was revealed to be pervasive and costly

In the first part of this two-part post, I wrote about the truly abysmal business outcomes our survey respondents reported in our "Poor Data Quality - Negative Business Outcomes" survey. Read about it here. In writing part 1, I was stunned by the following statistic: 95% of those suffering supply chain issues noted reduced or lost savings that might have been attained from better supply chain integration. The lost savings were large, with 15% reporting missed savings of up to 20%! In this post, I'll have a look at supply chains, and how passing bad data among participants harms participants and stakeholders, and how this can cause a "butterfly effect".

Supply Chains spread the social disease of bad data

A supply chain is a community of "consenting" organizations that pass data across and among themselves to facilitate business functions such as sales, purchase ordering, inventory management, order fulfillment, and the like. The intention is that executing these business functions properly will benefit the end consumer, and all the supply chain participants.

In case the Human Social Disease Analogy is not clear...

Human social diseases spread like wildfire among communities of consenting participants. In my college days, the news that someone contracted a social disease was met with chuckles, winks, and a trip to the infirmary for a shot of penicillin. Once, a business analogy to those long-past days might have been learning that the 9-track tape you sent to a business partner had the wrong blocking factor, was encoded in ASCII instead of EBCDIC, or contained yesterday's transactions instead of today's. All of those problems were easily fixed. Just like getting a shot of penicillin. Today, we live in a different world. As we learned with AIDS, a social disease can have pervasive and devastating results for individuals and society. With a communicable disease, the inoculant has its "bad data" encoded in DNA. Where supply chains are concerned, the social disease inoculant is likely to be an XML-encoded transaction sent from one supply chain participant to another. In this case, the "bad transaction" information about the customer, product, quantity, price, terms, due date, or other critical information will simply be wrong, causing a ripple of negative consequences across the supply chain. That ripple is the Butterfly Effect.

The BUTTERFLY EFFECT

The basis of the Butterfly Effect is that a small, apparently random input to an interconnected system (like a supply chain) may have a ripple effect, the ultimate impact of which cannot be reasonably anticipated. As the phrase was constructed by Philip Merilees back in 1972...

Does the flap of a butterfly’s wings in Brazil set off a tornado in Texas?

According to Martin Christopher, in his 2005 E-Book "Logistics and Supply Chain Management", the butterfly can and will upset the supply chain.

Today's supply chains are underpinned by the exchange of information between all the entities and levels that comprise the complete end-to-end [supply chain] network... The so-called "Bullwhip" effect is the manifestation of the way that demand signals can be considerably distorted as a result of multiple steps in the chain. As a result of this distortion, the data that is used as input to planning and forecasting activities can be flawed and hence forecast accuracy is reduced and more costs are incurred. (Emphasis provided)

Supply Chains Have Learned that Bad Data is a Social Disease

Supply chains connect a network of organizations that collaborate to create and distribute a product to its consumers. Specifically, Investopedia defines a supply chain as:

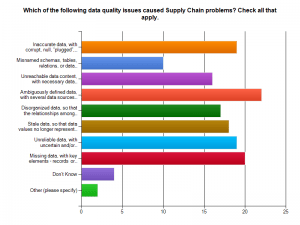

The network created amongst different companies producing, handling and/or distributing a specific product. Specifically, the supply chain encompasses the steps it takes to get a good or service from the supplier to the customer.

Managing the supply chain involves exchanges of data among participants. It is easy to see that exchanging bad data would disrupt the chain, adding cost, delay, and risk of ultimate delivery failure to the supply chain mix. With sufficient bad data, the value delivered by a managed supply chain would come at a higher cost and risk. Consider the graphic of supply chain management and the problems our survey respondents found in their experiences with supply chains and bad data.

Bad data means ambiguously defined data, missing data, and inaccurate data with corrupt or plugged values. These issues lead the list of supply chain data problems found by our survey respondents.
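Two of these categories, missing data and plugged values, can be made concrete with a simple record check. The Python sketch below is illustrative only: the field names echo the transaction details mentioned earlier in this post, while the list of placeholder values and the function `find_data_problems` are assumptions, not part of the survey or any supply chain standard. Ambiguously defined data cannot be caught this way, because that problem lies in the agreed meaning of a field rather than in its value.

```python
# Hypothetical sketch: flag missing and "plugged" (placeholder) values
# in a supply chain transaction represented as a plain dictionary.

# Placeholder values that often stand in for unknown data (assumed list).
PLUGGED_VALUES = {"", "N/A", "UNKNOWN", "TBD", "99999"}

# Fields every transaction is expected to carry (assumed names).
REQUIRED_FIELDS = ("customer", "product", "quantity", "price", "terms", "due_date")


def find_data_problems(txn):
    """Return a list of human-readable problems found in one transaction."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = txn.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif str(value).strip().upper() in PLUGGED_VALUES:
            problems.append(f"{field}: plugged value {value!r}")

    # A simple accuracy check: quantities must be positive numbers.
    quantity = txn.get("quantity")
    if isinstance(quantity, (int, float)) and quantity <= 0:
        problems.append("quantity: must be positive")
    return problems


good_txn = {
    "customer": "ACME", "product": "Bracket-7", "quantity": 100,
    "price": 12.5, "terms": "NET30", "due_date": "2014-03-01",
}
bad_txn = {
    "customer": "ACME", "product": "N/A", "quantity": 0,
    "price": 12.5, "terms": "NET30",
}

print(find_data_problems(good_txn))
print(find_data_problems(bad_txn))
```

A check like this belongs at the point where a transaction leaves one participant for another, so the bad record is stopped before it ripples down the chain.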

Would you be pleased to purchase a new car delivered with parts that do not work or break because suppliers misinterpreted part specifications? Do you remember the old 1960s-era Fords whose doors let snow inside because of their poor fit? Let's not pillory Ford, as GM and Chrysler had their own quality meltdowns too. Supply chain-derived quality issues like these kill revenues and harm consumers and brands.

Would you like to drive the automobile that contained safety components ordered with missing and corrupt data? What about that artificial knee replacement you were considering? Suppose the specifications for your medical device implant had been ambiguously defined and then misinterpreted. Ready to go ahead with that surgery? Bad data is a social disease, and it could make you suffer!

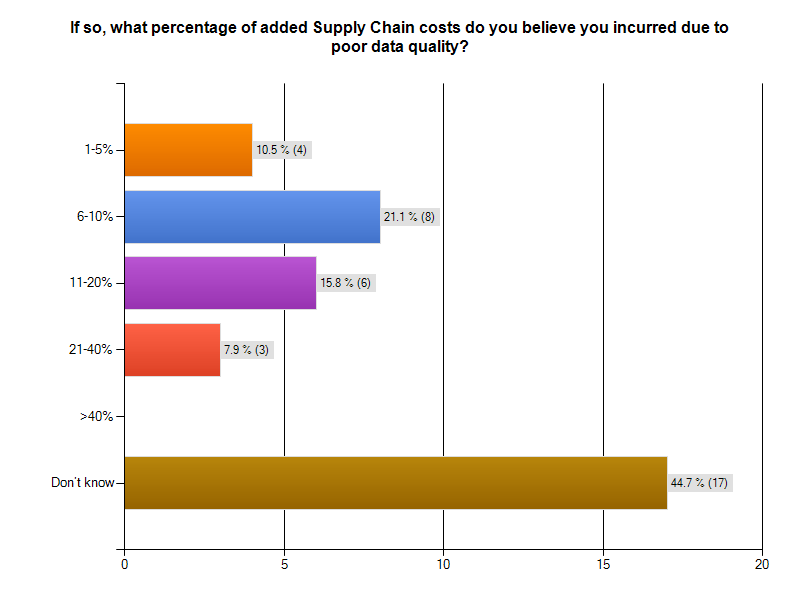

Bad Data is an Expensive Supply Chain Social Disease

Bad data is costing supply chain participants big money. As the graphic from our survey indicates, more than 20% of respondents to the Supply Chain survey segment thought that data quality problems added between 6% and 10% to the cost of operating their supply chain. Almost 16% said data quality problems added between 11% and 20% to supply chain operating costs. That is HUGE! The graphic following gives you survey results. Notice that 44% of the respondents could not monetize their supply chain data problems. That is a serious finding, in and of itself.

THOUGHT EXPERIMENT: CUT SUPPLY CHAIN MANAGEMENT COSTS by 20%

Over 15% of survey respondents with supply chain issues believed bad data added between 11% and 20% to the cost of operating their supply chain. Let's use 20% in our thought experiment, to yield a nice round number.

Understanding the total cost of managing a supply chain is a non-trivial exercise. Industry body The Supply Chain Council has defined The Supply Chain Operations Reference (SCOR®) model. According to that reference model, Supply Chain Management Costs include the costs to plan, source, and deliver products and services. The costs of making products and handling returns are captured in other aggregations.

For a manufacturing firm with a billion dollar revenue stream, the total costs of managing a supply chain will be around 20% of revenue, or $200,000,000 USD. Reducing this cost by 20% would mean an annual saving of $40,000,000 USD. That would be a significant savings, for a data cleanup and quality sustenance investment of perhaps $3,000,000. The clean-up investment would be a one-time expense. Even if the $40,000,000 were a one-time savings, the return would be about 13 times the investment, or roughly 1,333%.

But wait, it is better than that. The $40,000,000 savings recurs annually. The payback period is measured in months. The ROI is enormous. Having clean data pays big dividends!

If you think the one-time "get it right and keep it right" investment would be more like $10,000,000 USD, your payback period would still be measured in months, not years. Let's add a 20% additional cost or $2,000,000 USD in years 2-5 for maintenance and additional quality work. That means you would have spent $18,000,000 USD in 5 years to achieve savings of $200,000,000. That would be greater than a 10-times return on your money! Not too shabby an investment, and your partners and stakeholders would be saving money too. This scenario is really a win-win situation, right down the line to your customers.
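The arithmetic in this thought experiment is easy to check. The short Python sketch below uses only the dollar figures and percentages quoted above; the function and variable names are illustrative assumptions, and integer arithmetic keeps the dollar amounts exact.

```python
# Sketch of the thought-experiment arithmetic. All dollar figures come from
# the text; the function and variable names are hypothetical.

def payback_months(investment, annual_savings):
    """Months of savings needed to recover an up-front investment."""
    return 12 * investment / annual_savings


def return_multiple(total_cost, total_savings):
    """Total savings expressed as a multiple of total cost."""
    return total_savings / total_cost


revenue = 1_000_000_000                 # manufacturer's annual revenue
scm_cost = revenue * 20 // 100          # supply chain management cost: 20% of revenue
annual_savings = scm_cost * 20 // 100   # cutting that cost by 20%

# Scenario A: one-time $3,000,000 cleanup.
cleanup_a = 3_000_000
payback_a = payback_months(cleanup_a, annual_savings)
first_year_multiple_a = return_multiple(cleanup_a, annual_savings)

# Scenario B: $10,000,000 cleanup plus 20% maintenance in each of years 2-5.
cleanup_b = 10_000_000
maintenance_b = cleanup_b * 20 // 100
five_year_cost_b = cleanup_b + 4 * maintenance_b
five_year_savings = 5 * annual_savings
five_year_multiple_b = return_multiple(five_year_cost_b, five_year_savings)

print(f"Annual savings: ${annual_savings:,}")
print(f"Scenario A payback: {payback_a:.1f} months, "
      f"first-year return {first_year_multiple_a:.1%}")
print(f"Scenario B payback: {payback_months(cleanup_b, annual_savings):.1f} months")
print(f"Scenario B five-year cost ${five_year_cost_b:,}, "
      f"savings ${five_year_savings:,}, multiple {five_year_multiple_b:.1f}x")
```

Under these assumptions the $3,000,000 scenario pays back in under a month of savings, and the $10,000,000 scenario returns roughly 11 times its five-year cost.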

The Corcardia Group believes that total supply chain costs for hospitals approach 50% of the hospital's operating budget. For a hospital with a $60,000,000 USD annual operating budget, supply chain costs would be about $30,000,000 USD, so a 20% savings means roughly $6,000,000 USD would be freed for other uses, like curing patients and preventing illness.

Even Better...

For manufacturers, hospitals, and other supply chain participants, ridding themselves of bad data will produce still better returns. By cleaning up the data throughout the supply chain, it is likely that costs would go down while margins would improve. The product costs for participants could drop. Firms might realize an additional 5% cost savings from this as well. Their return is even better.

What does the Supply Chain Community say about Data Quality?

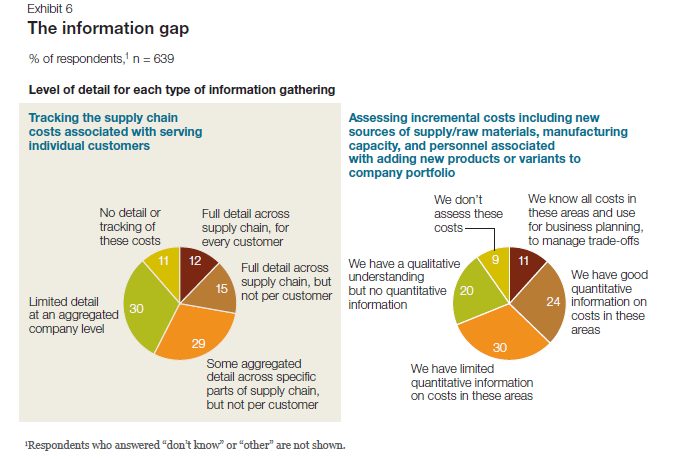

In a 2011 McKinsey & Company collection entitled McKinsey on Supply Chain: Selected Publications, which you can download here, the article "The Challenges Ahead for Supply Chains" by Trish Gyorey, Matt Jochim, and Sabina Norton goes right to the heart of a supply chain's dependency on data, and the weakness of current supply chain decision-making based on that data. According to the authors:

Knowledge is power

The results show a similar disconnection between data and decision making: companies seem to collect and use much less detailed information than our experience suggests is prudent in making astute supply chain decisions (Exhibit 6). For example, customer service is becoming a higher priority, and executives say their companies balance service and cost to serve effectively... Half of the executives say their companies have limited or no quantitative information about incremental costs for raw materials, manufacturing capacity, and personnel, and 41 percent do not track per-customer supply chain costs at any useful level of detail.

Here is Exhibit 6 - a graphic from their study, referenced in the previous quote.

Andrew White, writing about master data management for the Supply Chain Quarterly in its Q2-2013 issue, underscored the importance of data quality and consistency for supply chain participants.

... there is a growing emphasis among many organizations on knowing their customers' needs. More than this, organizations are seeking to influence the behavior of customers and prospects, guiding customers' purchasing decisions toward their own products and services and away from those of competitors. This change in focus is leading to a greater demand for and reliance on consistent data.

White's take-away from this is...

...as companies' growing focus on collaboration with trading partners and their need to improve business outcomes, data consistency, especially between trading partners, is increasingly a prerequisite for improved and competitive supply chain performance. As data quality and consistency become increasingly important factors in supply chain performance, companies that want to catch up with the innovators will have to pay closer attention to master data management. That may require supply chain managers to change the way they think about and utilize data.

Did everyone get that? Data Quality and Consistency are important factors in supply chain performance. You want your auto and your artificial knee joint to work properly and consistently, as their designers and builders intended. This means curing existing data social disease victims and preventing the recurrence and spread.

The Bottom Line

At this point, nearly 300 respondents have begun their surveys, and more than 200 have completed them. I urge those who have left their surveys in mid-course to complete them!

Bad data is a social disease that harms supply chain participants and stakeholders. Do take a stand on wiping it out. The simplest first step is to make your experiences known to us by visiting the IBM InfoGovernance site and taking our "Poor Data Quality - Negative Business Outcomes" survey.

When you get to the question about participating in an interview, answer "YES" and give us real examples of problems, solutions attempted, success attained, and failures sustained. Only by publicizing the magnitude and pervasiveness of this social disease will we collectively stand a chance of achieving cure and prevention.

As a follow-up next step, work with us to survey your organization in a private study that parallels our public InfoGovernance study. The public study forms an excellent baseline for us to compare and contrast the specific data quality issues within your organization. Data Quality will not be attained and sustained until your management understands the depth and breadth of the problem and its cost to your organization's bottom line.

Contact me here and let us help you build the business case for eliminating the causes of bad data.

Published by permission of Stuart Selip, Principal Consulting LLC

Bad Data is a Social Disease (Part 1)

Organizational bad data is a social disease easily passed to your business partners and stakeholders

With 200 completed responses in our "Poor Data Quality - Negative Business Outcomes" survey, run in conjunction with The Robert Frances Group, the IBM InfoGovernance Community, and Chaordix, it is safe to say that bad data is a social disease that can spread easily and quickly. Merriam-Webster defines a social disease as

a disease (as tuberculosis) whose incidence is directly related to social and economic factors

OK, that definition works for the bad data social disease. In this case, the social and economic factors enabling and potentiating this disease include

- Business management failing to fund and support data governance initiatives

- IT management failing to sell the value of data quality to their business colleagues

- Business partners failing to challenge and push-back when bad data is exchanged

- Financial analysts not downgrading firms that repeatedly refile 10-Ks due to bad data

- Customers not abandoning firms that err due to bad data quality and management

Social diseases negatively affect the sufferer, their partners, and the community around them. According to our respondents:

- 95% of those suffering supply chain issues noted reduced or lost savings that might have been attained from better supply chain integration.

- 72% reported customer data problems, and 71% of those respondents lost business because they didn't know their customer

- 71% of those suffering financial reporting problems said poor data quality caused them to reach and act upon erroneous conclusions based upon materially faulty revenues, expenses, and/or liabilities

- 66% missed the chance to accelerate receivables collection

- 49% reported operations problems from bad data, and 87% of those respondents suffered excess costs for business operations

- 27% reported strategic planning problems, with 75% of those indicating challenges with financial records, profits and losses of units, taxes paid, capital, true customer profiles, overhead allocations, marginal costs, shareholders, etc.

What is in Part 2?

In next week's post, we'll examine some of our survey results specific to bad data and the supply chain. A successful supply chain requires sound internal data integration and equally sound data exchange and integration across chain participants. A network of willing participants exchanging data is fertile ground for spreading the social disease of business. Expect a thought experiment about wringing the bad data quality costs out of supply chain management, and see what some supply chain experts think about the dependency of effective supply chains on high quality data.

The Bottom Line

Believe that bad data is a social disease and take a stand on wiping it out. The simplest first step is to make your experiences known to us by visiting the IBM InfoGovernance site and taking our "Poor Data Quality - Negative Business Outcomes" survey. When you get to the question about participating in an interview, answer "YES" and give us real examples of problems, solutions attempted, success attained, and failures sustained. Only by publicizing the magnitude and pervasiveness of this social disease will we collectively stand a chance of achieving cure and prevention. As a follow-up next step, work with us to survey your organization in a private study that parallels our public InfoGovernance study. The public study forms an excellent baseline for us to compare the specific data quality issues within your organization. You will not attain and sustain data quality until your management understands the depth and breadth of the problem and its cost to your organization's bottom line. Bad Data is a needless and costly social disease of business. Let's move forward swiftly and decisively to wipe it out!

Published by permission of Stuart Selip, Principal Consulting LLC

As the consulting industry changes will you be the disrupter, not the disrupted?

Will you be the disrupter, not the disrupted? This is the question that came to mind as I read Consulting on the Cusp of Disruption, by Clayton M. Christensen, Dina Wang, and Derek van Bever, in the October 2013 issue of the Harvard Business Review (HBR). With an online subscription, you can read it here. Disruption means industry leaders are responding to the changes in customer demands and global economics by making fundamental changes in their approach to services, service delivery, engagement models, and the economic model on which their industry is based. As an example of disruption, the HBR authors open by discussing the McKinsey & Company move to develop McKinsey Solutions, an offering that is not "human-capital based", but instead focuses on technology and analytics embedded at their client. This is a significant departure for a firm known for hiring the best and the brightest, to be tasked with delivering key insights and judgment, especially when the judgment business was doing well. The authors make the point that the consulting industry has evolved over time.

- Generalists have become Functional Specialists

- Local Structures developed into Global Structures

- Tightly Structured Teams morphed into spider webs of Remote Specialists

However, McKinsey Solutions was not evolutionary. In its way, it was a revolutionary breakthrough for McKinsey. While McKinsey Solutions' success meant additional revenue for the firm, and offered another means of remaining "Top of Mind" for the McKinsey Solutions' client, the move was really a first line of defense against disruption in the consulting industry. By enjoying "first mover advantage" McKinsey protected their already strong market position, and became the disrupter, not the disrupted.

What is the classic pattern of disruption?

According to Christensen, et al,

New competitors with new business models arrive; incumbents choose to ignore the new players or flee to higher-margin activities; a disrupter whose product was once barely good enough achieves a level of quality acceptable to the broad middle of the market, undermining the position of longtime leaders and often causing the "flip" to a new basis of competition.

Cal Braunstein, CEO of The Robert Frances Group, believes that IT needs a disruptive agenda. In his research note, Cal references the US auto industry back in the happy days when the Model "T" completely disrupted non-production line operations of competitors. But when disruption results in a workable model with entrenched incumbents, the market once again becomes ripe for disruption. That is exactly what happened to the "Big 3" US automakers when Honda and Toyota entered the US market with better quality and service at a dramatically lower price point. Disruption struck again. Detroit never recovered. The City of Detroit itself is now bankrupt. Disruption has significant consequences.

Industry leaders may suffer most from disruption

In his work "The Innovator's Solution" HBR author Clayton M. Christensen addressed the problem of incumbents becoming vulnerable to disruption, writing

An organization's capabilities become its disabilities when disruption is afoot.

The disruption problem is worse for market leaders, according to Christensen.

No challenge is more difficult for a market leader facing disruption than to turn and fight back - to disrupt itself before a competitor does... Success in self-disruption requires at least the following six elements: An autonomous business unit... Leaders who come from relevant "schools of experience"... A separate resource allocation process... Independent sales channels... A new profit model... Unwavering commitment by the CEO...

So, it will be tough to disrupt yourself if you are big, set in your ways, and don't have the right CEO.

Being the disrupter, not the disrupted

The HBR authors characterized three forms of consulting offering, ranging from the traditional "Solution Shop" to "Value-added Process Businesses" and then to "Facilitated Networks". The spectrum runs from gifted but opaque expert practitioners who deliver pronouncements and charge by the hour, through repeatable process delivery that charges for delivered results, to dynamic and configurable collections of experts linked by a business network layer. In my experience, the expert network form is the most flexible, least constrained, and most likely to deliver value at an exceptional price. It is at once the most disruptive, and presently the least likely form to be destabilized by other disruptive initiatives.

The Bottom Line

If you are in the consulting industry and you don't recognize that disruptive forces are changing the industry and your market's expectations as you read this, you will surely be the disrupted, not the disrupter. On the other hand, disrupters can be expected to provide a consulting service that will deliver much more value for a much lower price point. We are talking here of more than a simple process improvement 10% gain. It will be a quantum jump. Like McKinsey, that may come from embedding in some new solution that accelerates the consulting process and cuts costs. Now is the time to develop situation awareness. What are the small independent competitors doing? Yes, the little firms that you don't really think will invade your market and displace you. Watch them carefully, and learn before it is too late. Those readers who man those small, agile, and disruptive firms should ensure they understand their prospect's pain points and dissatisfaction with the status quo. As physician Sir William Osler famously said, "Listen to your patient, he is telling you his diagnosis". Do it now!

Dell: The Privatization Advantage

Lead Analyst: Cal Braunstein

RFG Perspective: The privatization of Dell Inc. closes a number of chapters for the company and puts it more firmly on a different course. The Dell of yesterday was primarily a consumer company with a commercial business, both with a transactional model. The new Dell is planned to be a commercial-oriented company with an interest in the consumer space. The commercial side of Dell will attempt to be relationship driven while the consumer side will retain its transactional model. The company has some solid products, channels, market share, and financials that can carry the company through the transition. However, it will take years before the new model is firmly in place and adopted by its employees and channels, and competitors will not be sitting idly by. IT executives should expect Dell to pull through this and therefore should take advantage of the Dell business model and transitional opportunities as they arise.

Shareholders of IT giant Dell approved a $24.9bn privatization takeover bid from company founder and CEO Michael Dell and Silver Lake Partners, financed in part by banks and a loan from Microsoft Corp. It was a hard fought battle with many twists and turns but the ownership uncertainty is now resolved. The open question is whether it was worth it. Will the company and Michael Dell be able to change the vendor's business model and succeed in the niche that he has carved out?

Dell's New Vision

After the buyout Michael Dell spoke to analysts about his five-point plan for the new Dell:

- Extend Dell's presence in the enterprise sector through investments in research and development as well as acquisitions. Dell's enterprise solutions market is already a $25 billion business and it grew nine percent last quarter – at a time competitors struggled. According to the CEO, Dell is number one in servers in the Americas and AP, ships more terabytes of storage than any competitor, and completed 1,300 mainframe migrations to Dell servers. (Worldwide, IDC says Hewlett-Packard Co. (HP) is still in first place for server shipments by a hair.)

- Expand sales coverage and push more solutions through the Partner Direct channel. Dell has more than 133,000 channel partners globally, with about 4,000 certified as Preferred or Premier. Partners drive a major share of Dell's business.

- Target emerging markets. While Dell does not break out revenue numbers by geography, APJ and BRIC (Brazil, Russia, India and China) saw minor gains over the past quarter year-over-year but China was flat and Russia sales dropped by 33 percent.

- Invest in the PC market as well as in tablets and virtual computing. The company will not manufacture phones but will sell mobile solutions in other mobility areas. Interestingly, he said Dell is now more a commercial seller than a consumer one when it comes to end user computing. This is a big shift from the old Dell and puts them in the same camp as HP. The company appears to be structuring a full-service model for commercial enterprises.

- "Accelerate an enhanced customer experience." Michael Dell stipulates that Dell will serve its customers with a single-minded purpose and drive innovations that will help them be more productive, grow, and achieve their goals.

Strengths, Weaknesses, Challenges and Competition

With the uncertainty over, Dell can now fully focus on execution of plans that were in place prior to the initial stalled buyout attempt. Financially Dell has sufficient funds to address its business needs and operates with a strong positive cash flow. Brian Gladden, Dell's CFO, said Dell was able to generate $22 billion in cash flow over the past five years and conceded the new Dell debt load would be under $20 billion. This should give the company plenty of room to maneuver.

In the last five quarters Dell has spent $5 billion on acquisitions and, since 2007 when Michael Dell returned as CEO, it has spent more than $13.7 billion on acquisitions. Gladden said Dell will aim to reduce its debt, invest in enhanced and innovative product and services development, and buy other companies. However, the acquisitions will be of a "more complementary" type rather than some of the expensive, big-bang deals Dell has done in the past.

The challenge for Dell financially will be to grow the enterprise segments faster than the end user computing markets collapse. As can be noted in the chart below, the enterprise offerings are less than 40 percent of the revenues currently and while they are growing nicely, the end user market is losing speed at a more rapid rate in terms of dollars.

Source: Dell's 2Q FY14 Performance Review

Dell also has a strong set of enterprise products and services. The server business does well and the company has positioned itself well in the hyperscale data center solution space where it has a dominant share of custom server sales. Unfortunately, margins are not as robust in that space as other parts of the server market. Moreover, the custom server market is one that fulfills the needs of cloud service providers and Dell will have to contend with "white box" providers and lower prices and shrinking margins going forward. Networking is doing well too but storage remains a soft spot. After dropping out as an EMC Corp. channel partner and betting on its own acquired storage companies, Dell lost ground and still struggles in the non-DAS space to gain the momentum needed. The mid-range EqualLogic and higher-end Compellent solutions, while good, have stiff competition and need to up their game if Dell is to become a full-service provider.

Software is growing but the base is too small at the moment. Nonetheless, this will prove to be an important sector for Dell going forward. With major acquisitions (such as Boomi, KACE, Quest Software and SonicWALL) and the top leadership of John Swainson, who has an excellent record of growing software companies, Dell software is poised to be an integral part of the new enterprise strategy. Meanwhile, its Services Group appears to be making modest gains, although its Infrastructure, Cloud, and Security services are resonating with customers. Overall, though, this needs to change if Dell is to move upstream and build relationship sales. In that the company traditionally has been transaction oriented, moving to a relationship model will be one of its major transformational initiatives. This process could easily take up to a decade before it is fully locked in and units work well together.

Michael Dell also stated "we stand on the cusp of the next technological revolution. The forces of big data, cloud, mobile, and security are changing the way people live, businesses operate, and the world works – just as the PC did almost 30 years ago." The new strategy addresses that shift but the End User Computing unit still derives most of its revenues from desktops, thin clients, software and peripherals. About 40 percent comes from mobility offerings but Dell has been losing ground here. The company will need to shore that up in order to maintain its growth and margin objectives.

While Dell transforms itself, its competitors will not be sitting still. HP is in the midst of its own makeover, has good products and market share but still suffers from morale and other challenges caused by the upheavals over the last few years. IBM Corp. maintains its version of the full-service business model but will likely take on Dell in selected markets where it can still get decent margins. Cisco Systems Inc. has been taking market share from all the server vendors and will be an aggressive challenger over the next few years as well. Hitachi Data Systems (HDS), EMC, and NetApp Inc. along with a number of smaller players will also test Dell in the non-DAS (direct-attached storage) market segments. It remains to be seen if Dell can fend them off and grow its revenues and market share.

Summary

Michael Dell and the management team have major challenges ahead as they attempt to change the business model, re-orient people's mindsets, develop innovative, efficient and affordable solutions, and fend off competitors while they slowly back away from the consumer market. Dell wants to be the infrastructure provider for cloud providers and enterprises of all types – "the BASF inside" in every company. It still intends to do this by becoming the top vendor of choice for end-to-end IT solutions and services. As the company still has much work to do in creating a stronger customer relationship sales process, Dell will have to walk some fine lines while it figures out how to create the best practices for its new model. Privatization enables Dell to deal with these issues more easily without public scrutiny and sniping over margins, profits, revenues and strategies.

RFG POV: Dell will not be fading away in the foreseeable future. It may not be so evident in the consumer space but in the commercial markets privatization will allow it to push harder to remain or be one of the top three providers in each of the segments it plays in. The biggest unknown is its ability to convert to a relationship management model and provide a level of service that keeps clients wanting to spend more of their IT dollars with Dell and not the competition. IT executives should be confident that Dell will remain a reliable, long-term supplier of IT hardware, software and services. Therefore, where appropriate, IT executives should consider Dell for its short list of providers for infrastructure products and services, and increasingly for software solutions related to management of big data, cloud and mobility environments.

The Future of NAND Flash; the End of Hard Disk Drives?

Lead Analyst: Gary MacFadden

RFG POV: In the relatively short and fast-paced history of data storage, the buzz around NAND Flash has never been louder, the product innovation from manufacturers and solution providers never more electric. Thanks to mega-computing trends, including analytics, big data, cloud and mobile computing, along with software-defined storage and the consumerization of IT, the demand for faster, cheaper, more reliable, manageable, higher capacity and more compact Flash has never been greater. But how long will the party last?

In this modern era of computing, the art of dispensing predictions, uncovering trends and revealing futures is de rigueur. To quote that well-known trendsetter and fashionista, Cher, "In this business, it takes time to be really good – and by that time, you're obsolete." While meant for another industry, Cher's ruminations seem just as appropriate for the data storage space.

At a time when industry pundits and Flash solution insiders are predicting the end of mass data storage as we have known it for more than 50 years, namely the mechanical hard disk drive (HDD), storage futurists, engineers and computer scientists are paving the way for the next generation of storage beyond NAND Flash – even before Flash has had a chance to become a mature, trusted, reliable, highly available and ubiquitous enterprise class solution. Perhaps we should take a breath before we trumpet the end of the HDD era or proclaim NAND Flash as the data storage savior of the moment.

Short History of Flash

Flash has been commercially available since its invention and introduction by Toshiba in the late 1980s. NAND Flash is known for being at least an order of magnitude faster than HDDs; it is non-volatile memory (NVM) with no moving parts and therefore requires far less power. NAND Flash is found in billions of personal devices, from mobile phones, tablets, laptops and cameras to thumb drives (USB drives), and over the last decade it has become more powerful, compact and reliable as prices have dropped, making enterprise-class Flash deployments much more attractive.

At the same time, IOPS-hungry applications such as database queries, OLTP (online transaction processing) and analytics have pushed traditional HDDs to the limit of the technology. To maintain performance measured in IOPS or read/write speeds, enterprise IT shops have employed a number of HDD workarounds such as short stroking, thin provisioning and tiering. While HDDs can still meet the performance requirements of most enterprise-class applications, organizations pay a huge penalty in additional power consumption, data center real estate (it takes 10 or more high-performance HDDs to match the performance of the slowest enterprise-class Flash or solid-state drive (SSD)) and additional administrator, storage and associated equipment costs.
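The drive-count penalty described above is simple arithmetic. The sketch below is illustrative only: the function name and both IOPS figures are assumptions chosen for the example, not measurements or vendor specifications.

```python
import math


def hdds_needed(target_iops: float, iops_per_hdd: float) -> int:
    """Return how many HDDs are needed so their combined IOPS meets the target."""
    if target_iops <= 0 or iops_per_hdd <= 0:
        raise ValueError("IOPS figures must be positive")
    return math.ceil(target_iops / iops_per_hdd)


# Assumed, illustrative figures (not from the article or any datasheet).
ASSUMED_HDD_IOPS = 200    # hypothetical high-performance HDD
ASSUMED_SSD_IOPS = 5000   # hypothetical low-end enterprise SSD

drives = hdds_needed(ASSUMED_SSD_IOPS, ASSUMED_HDD_IOPS)
print(f"HDDs needed to match one SSD on IOPS: {drives}")
```

Each additional drive in that count also adds power, rack space and administration, which is where the penalty accumulates.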

Flash is becoming pervasive throughout the compute cycle. It is now found on DIMM (dual inline memory module) memory cards to help solve the in-memory data persistence problem and improve latency. There are Flash cache appliances that sit between the server and a traditional storage pool to help boost access times to data residing on HDDs as well as server-side Flash or SSDs, and all-Flash arrays that fit into the SAN (storage area network) storage fabric or can even replace smaller, sub-petabyte, HDD-based SANs altogether.

There are at least three different grades of Flash drives, starting with the top-performing, longest-lasting – and most expensive – SLC (single-level cell) Flash, followed by MLC (multi-level cell), which doubles the amount of data or electrical charges per cell, and even TLC (triple-level cell), which triples it. As Flash manufacturers continue to push the envelope on Flash drive capacity, the individual cells have gotten smaller; now they are below 20 nm (one nanometer is a billionth of a meter) in width, or tinier than a human virus at roughly 30-50 nm.

Each cell can withstand only a finite number of writes and erasures before its performance starts to degrade; drive endurance is typically rated in TBW (total bytes written). These program/erase (P/E) cycles cause SSDs and Flash to wear out because the oxide layer that holds each cell's charge degrades with every electrical charge. However, Flash management software that uses striping across drives, garbage collection and wear-leveling to distribute writes evenly across the drive increases longevity.
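A toy model shows why spreading writes evenly extends drive life. This is a minimal sketch, not how any real Flash controller works: the function name, the block count and the P/E limit are all assumptions, and real firmware also handles garbage collection, static data and over-provisioning.

```python
def writes_before_wearout(num_blocks: int, max_pe_cycles: int, wear_level: bool) -> int:
    """Count writes a toy drive accepts before the chosen block hits its P/E limit."""
    erase_counts = [0] * num_blocks
    writes = 0
    while True:
        if wear_level:
            # Wear-leveling: always pick the least-worn block.
            block = min(range(num_blocks), key=lambda i: erase_counts[i])
        else:
            # No leveling: every write lands on the same "hot" block.
            block = 0
        if erase_counts[block] >= max_pe_cycles:
            return writes
        erase_counts[block] += 1
        writes += 1


# Assumed toy parameters: 4 blocks, 10 P/E cycles per block.
print(writes_before_wearout(4, 10, wear_level=False))
print(writes_before_wearout(4, 10, wear_level=True))
```

In this model the leveled drive accepts a number of writes equal to the block count times the per-block limit, while the unleveled drive fails as soon as its single hot block is exhausted.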

Honey, I Shrunk the Flash!

As the cells get smaller, below 20 nm, more bit errors occur. New 3D architectures announced and discussed at the Flash Memory Summit (FMS) by a number of vendors hold the promise of replacing the traditional NAND Flash floating gate architecture. Samsung, for instance, announced the availability of its 3D V-NAND, which leverages a Charge Trap Flash (CTF) technology that replaces the traditional floating gate architecture to help prevent interference between neighboring cells and improve performance, capacity and longevity.

Samsung claims the V-NAND offers an "increase of a minimum of 2X to a maximum 10X higher reliability, but also twice the write performance over conventional 10nm-class floating gate NAND flash memory." If 3D Flash proves successful, it is possible that the cells can be shrunk to the sub-2nm size, which would be equivalent to the width of a double-helix DNA strand.

Enterprise Flash Futures and Beyond

Flash appears headed for use in every part of the server and storage fabric, from DIMM to server cache and storage cache and as a replacement for HDD across the board – perhaps even as an alternative to tape backup. The advantages of Flash are many, including higher performance, smaller data center footprint and reduced power, admin and storage management software costs.

As Flash prices continue to drop concomitant with capacity increases, reliability improvements and drive longevity – which today already exceeds the longevity of mechanical HDDs for the vast majority of applications – the argument for Flash, or tiers of Flash (SLC, MLC, TLC), replacing HDD is compelling. The big question for NAND Flash is not: when will all Tier 1 apps be running Flash at the server and storage layers; but rather: when will Tier 2 and even archived data be stored on all-Flash solutions?

Much of the answer resides in the growing demands for speed and data accessibility as business use cases evolve to take advantage of higher compute performance capabilities. The old adage that 90%-plus of data that is more than two weeks old rarely, if ever, gets accessed no longer applies. In the healthcare ecosystem, for example, longitudinal or historical electronic patient records now go back decades, and pharmaceutical companies are required to keep clinical trial data for 50 years or more.

Pharmacological data scientists, clinical informatics specialists, hospital administrators, health insurance actuaries and a growing number of physicians regularly plumb the depths of healthcare-related Big Data that is both newly created and perhaps 30 years or more in the making. Other industries, including banking, energy, government, legal, manufacturing, retail and telecom are all deriving value from historical data mixed with other data sources, including real-time streaming data and sentiment data.

Not all data may be useful or meaningful, but that hasn't stopped business users from including all potentially valuable data in their searches and their queries. More data is apparently better, and faster is almost always preferred, especially for analytics, database and OLTP applications. Backup windows shrink as well, and recoveries and other batch jobs often run much faster with Flash.

What Replaces DRAM and Flash?

Meanwhile, engineers and scientists are working hard on replacements for DRAM (dynamic random-access memory) and Flash, introducing MRAM (magnetoresistive), PRAM (phase-change), SRAM (static) and RRAM – among others – to the compute lexicon. RRAM or ReRAM (resistive random-access memory) could replace DRAM and Flash, which both use electrical charges to store data. RRAM uses "resistance" to store each bit of information. According to wiseGEEK, "The resistance is changed using voltage and, also being a non-volatile memory type, the data remain intact even when no energy is being applied. Each component involved in switching is located in between two electrodes and the features of the memory chip are sub-microscopic. Very small increments of power are needed to store data on RRAM."

According to Wikipedia, RRAM or ReRAM "has the potential to become the front runner among other non-volatile memories. Compared to PRAM, ReRAM operates at a faster timescale (switching time can be less than 10 ns), while compared to MRAM, it has a simpler, smaller cell structure (less than 8F² MIM stack). There is a type of vertical 1D1R (one diode, one resistive switching device) integration used for crossbar memory structure to reduce the unit cell size to 4F² (F is the feature dimension). Compared to flash memory and racetrack memory, a lower voltage is sufficient and hence it can be used in low power applications."

Then there's atomic storage, a nanotechnology approach that IBM scientists and others are working on today. The aim is to see if it is possible to store a bit of data on a single atom. To put that in perspective, a single grain of sand contains many billions of billions of atoms. IBM is also working on Racetrack memory, a type of non-volatile memory that holds the promise of being able to store 100 times the capacity of current SSDs.

Flash Lives Everywhere! … for Now

Just as paper and computer tape drives continue to remain relevant and useful, HDD will remain in favor for certain applications, such as sequential processing workloads or when massive, multi-petabyte data capacity is required. And lest we forget, HDD manufacturers continue to improve the speed, density and cost equation for mechanical drives. Also, 90% of data storage manufactured today is still HDD, so it will take a while for Flash to outsell HDD and even for Flash management software to reach the level of sophistication found in traditional storage management solutions.

That said, there are Flash proponents who can't wait for the changeover to happen and don't want or need Flash to reach parity with HDD on features and functionality. One of the most talked about keynote presentations at last August's Flash Memory Summit (FMS) was given by Facebook's Jason Taylor, Ph.D., Director of Infrastructure and Capacity Engineering and Analysis. Facebook's and Dr. Taylor's point of view is: "We need WORM or Cold Flash. Make the worst Flash possible – just make it dense and cheap, long writes, low endurance and lower IOPS per TB are all ok."

Other presenters, including the CEO of Violin Memory, Don Basile, and CEO Scott Dietzen of Pure Storage, made relatively bold predictions about when Flash would take over the compute world. Basile showed a 2020 Predictions slide in his deck that stated: "All active data will be in memory." Basile anticipates "everything" (all data) will be in memory within 7 years (except for archive data on disk). Meanwhile, Dietzen is an articulate advocate for all-Flash storage solutions because "hybrids (arrays with Flash and HDD) don't disrupt performance. They run at about half the speed of all-Flash arrays on I/O-bound workloads." Dietzen also suggests that with compression and data deduplication capabilities, Flash has reached or dramatically improved on cost parity with spinning disk.
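The cost-parity argument rests on a simple ratio: data reduction lowers the effective price of each stored gigabyte. The sketch below uses hypothetical helper names and placeholder prices that are assumptions for illustration only; they are not figures from the presentations or from market data.

```python
def effective_cost_per_gb(raw_cost_per_gb: float, reduction_ratio: float) -> float:
    """Effective cost per GB of data stored after compression/deduplication."""
    if raw_cost_per_gb <= 0:
        raise ValueError("raw cost must be positive")
    if reduction_ratio < 1:
        raise ValueError("reduction ratio must be at least 1 (1 = no reduction)")
    return raw_cost_per_gb / reduction_ratio


def breakeven_reduction_ratio(flash_raw_cost: float, hdd_raw_cost: float) -> float:
    """Reduction ratio at which Flash matches unreduced HDD on effective cost per GB."""
    if flash_raw_cost <= 0 or hdd_raw_cost <= 0:
        raise ValueError("costs must be positive")
    return flash_raw_cost / hdd_raw_cost


# Placeholder prices in dollars per raw GB (assumptions, not real quotes).
ASSUMED_FLASH_COST = 5.0
ASSUMED_HDD_COST = 1.0

ratio = breakeven_reduction_ratio(ASSUMED_FLASH_COST, ASSUMED_HDD_COST)
print(f"Break-even data reduction ratio: {ratio}:1")
print(f"Flash effective $/GB at that ratio: {effective_cost_per_gb(ASSUMED_FLASH_COST, ratio)}")
```

The comparison assumes the HDD tier stores data unreduced; if the disk array also compresses or deduplicates, the break-even ratio for Flash rises accordingly.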

Bottom Line

NAND Flash has definitively demonstrated its value for mainstream enterprise performance-centric application workloads. When, how and if Flash replaces HDD as the dominant media in the data storage stack remains to be seen. Perhaps some new technology will leapfrog over Flash and signal its demise before it has time to really mature.

For now, HDD is not going anywhere, as it represents over $30 billion of new sales in the $50-billion-plus total storage market – not to mention the enormous investment that enterprises have in spinning storage media that will not be replaced overnight. But Flash is gaining, and users and their IOPS-intensive apps want faster, cheaper, more scalable and manageable alternatives to HDD.

RFG POV: At least for the next five to seven years, Flash and its adherents can celebrate the many benefits of Flash over HDD. IT executives should recognize that for the vast majority of performance-centric workloads, Flash is much faster, lasts longer and costs less than traditional spinning disk storage. And Flash vendors already have their sights set on Tier 2 apps, such as email, and Tier 3 archival applications. Fast, reliable and inexpensive is tough to beat and for now Flash is the future.

Focusing the Health Care Lens

Lead Analyst: Maria DeGiglio

RFG Perspective: There are a myriad of components, participants, issues, and challenges that define health care in the United States today. To this end, we have identified five main components of health care: participants, regulation, cost, access to/provisioning of care, and technology – all of which intersect at many points. Health care executives – whether payers, providers, regulators, or vendors – must understand these interrelationships, and how they continue to evolve, so as to proactively address them in their respective organizations in order to remain competitive.

This blog will discuss some key interrelationships among the aforementioned components and tease out some of the complexities inherent in the dependencies and co-dependencies in the health care system and their effect on health care organizations.

The Three-Legged Stool:

One way to examine the health care system in the United States is through the interrelationship and interdependence among access to care, quality of care, and cost of care. If any one of access, quality, or cost is removed, the relationship (i.e., the stool) collapses. Let's examine each component.

Access to health care comprises several factors including the ability to pay (e.g., through insurance and/or out of pocket), a health care facility that meets the health care need of the patient, transportation to and from that facility, and the fulfillment of whatever post-discharge orders are given (e.g., rehabilitation, pharmacy, etc.).

Quality of care includes, but is not limited to, a health care facility or physician's office that employs medical people with the skills to effectively diagnose and treat the specific health care condition realistically and satisfactorily. This means without error, without causing harm to the patient, and without requiring the patient to make copious visits because the clinical talent is unable to correctly diagnose and treat the condition.

Lastly, cost of care comprises multiple sources including: